Last month, I was thrilled to join members of the nonprofit and tech community at Cool/Scary AI Sh*t DC. Ben Childers, the organizer of the event, wanted to bring together people who have questions about AI and people who can offer guidance and insight into AI tools.

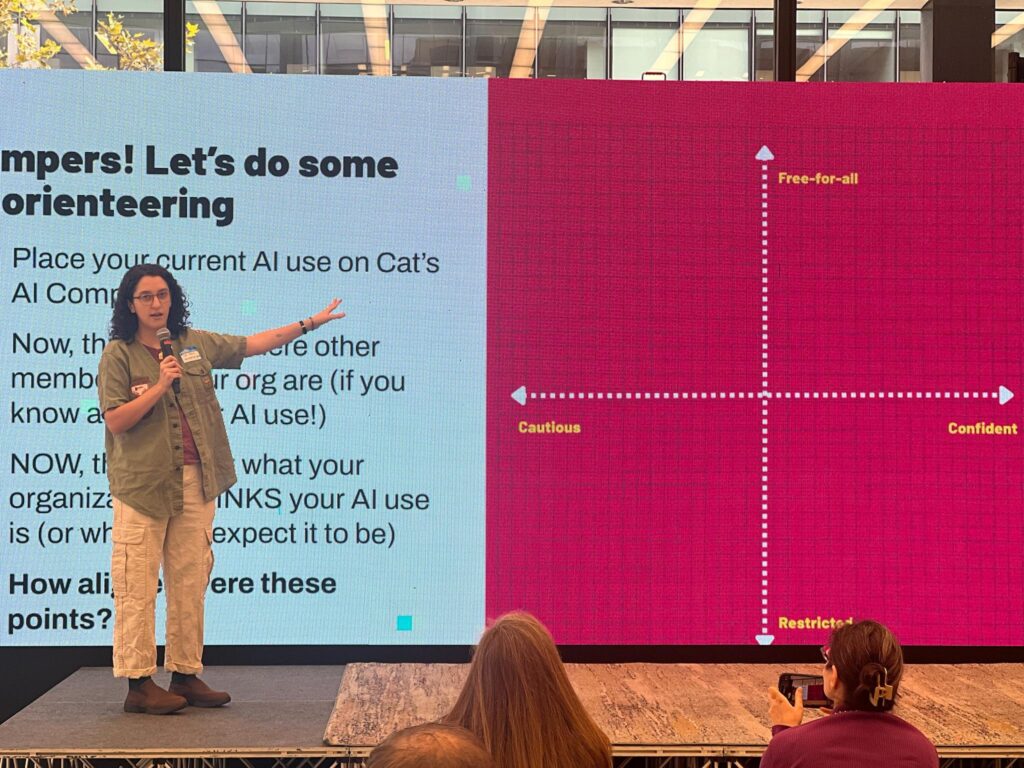

My session, “Help! I’m lost in the AI wilderness: Building an AI policy for responsible, productive team use,” pulled back the curtain on how to build a responsible internal AI use policy for an office team. After the session, I had great conversations with attendees that reinforced what I already suspected to be true: despite (and in truth, also because of) the reservations people have around AI, organizations and their teams are seeking practical frameworks for navigating it.

Part of my presentation focused on why responsible and ethical guidelines need to be included in a use policy, especially if we want to see AI regulated at the state and federal levels. Innovation should not outpace integrity; in fact, AI use without integrity shouldn’t be seen as innovative at all.

My presentation was grounded in the key findings of our comprehensive AI white paper, Navigating the AI Wilderness. This guide is designed specifically for advocacy professionals who need a clear map and compass to define the purpose and scope of their team’s AI use and to establish ethical, responsible guardrails. If you’re looking to create robust, realistic AI guidelines for your team, I encourage you to check out the full paper here.

Though navigating AI can seem daunting, refusing to understand it will only ensure that we, and the communities we serve, get left behind. I left the event inspired by the attendees who were newly motivated to approach the world of AI with curiosity and care.